Not all research is created equal. Just because a study is published in a journal, even a reputable one, does not mean it is reliable. In fact, there is research suggesting that the quality of a study and the prestige of the journal that publishes it can have an inverse relationship. What should you look for when trying to find a reliable source?

Trust and confidence in media are near an all-time low, with 44% of people in the United States, 52% in Canada, and 49% internationally indicating that they believe in the quality of the information they are getting.[1] The scientific community’s integrity has also been called into question.[2]

Consequently, the sentiment among keyboard warriors touting the need to “do your own research” is near an all-time high. If we are looking to do our own research, what sources can we trust?

The ranking of the world’s scientific journals is taken very seriously.[3] As such, one would assume a higher ranking equates to a trustworthy source. But a more in-depth examination of the reliability of articles published in highly ranked journals suggests reputation alone does not guarantee high-quality research.[4]

To be able to stand behind our resources confidently, we need to take a closer look.

Don’t believe the hype

The most widely used and accepted method of ranking scientific journals is the “impact factor” (IF). The IF is, more or less, based on how frequently a journal’s articles are cited by fellow scientists in the field. Of course, a ranking system based on citations alone has many identifiable flaws, as the decision to cite a paper is almost completely subjective. It does, however, offer a measurable base and a sense of general scientific community sentiment, which is a good place to start.[5]

A 2018 review of the quality of articles published in journals around the world,[6] juxtaposed with the journals’ rankings in the Scimago Journal & Country Rank database, revealed significant findings regarding the reliability of published studies:

- Retractions & error detection – Lower-ranked journals have an article retraction rate four times higher than that of more prestigious journals. At first blush, this seems an obvious indicator of a lesser product. However, if we assume that higher-ranked journals have a larger and more discerning readership, and thus come under greater scrutiny from peers, their errors should be caught and retracted more often, so the opposite result, or at least parity, should be expected.

- Analysis of methodology – Examination of in-vivo animal experimentation methods and processes showed that even foundational principles such as blinded outcome assessment and randomization were more often ignored in higher-ranked journals than in their lower-ranked counterparts.

- Reproducibility – A significant checkpoint for assessing the reliability of findings in a journal article is another researcher’s ability to reproduce its results using identical methodology. When journals at each end of the spectrum were reviewed, no significant difference was found between the reproduction success rates of more esteemed journals and those with a lower profile.

What separates a high-quality source from a conveyor of junk science?

Unless we are trained in reading journal articles, chances are that when we search for evidence of a scientific claim, we look only at the final paragraph of the abstract. There is no shame in that, assuming we are having a casual debate with friends (or strangers). We might be keen to just pick a “reputable” journal and call it a day. If we want to be taken seriously, however, there is more to it.

In a world in which journals are trying to sell subscriptions and scientists are racing to make the next big breakthrough and a name for themselves, methodology is king.[7]

Most of us have heard the terms double-blind, peer-reviewed, meta-analysis, replicable results, and other catchphrases used in reference to “good science”. Other things that we should look for include a lengthy, specific title, authors who are affiliated with a university (rather than freelance), and an original research study presented with data and analysis of findings.[8] The structure of the paper, however, is less important than the design and methodology of the study being reviewed.

How to do our own research when we are not scientists

To save ourselves the embarrassment of our nemesis du jour discrediting our source, here are three short checkpoints that will increase the odds of citing a reliable study:

- How long ago was the research published? Anything older than 10 years is suspect. Equipment, technology, processes, and knowledge change quickly. Though there is still value in older research, chances are there is newer research on a topic of interest. It just might not align with our current belief paradigm.

- How long is the title of the article? Size isn’t everything, but a lengthier title suggests a more refined, specific, and intentional research question. A specific question has a specific answer, which is associated with greater confidence in the findings.

- Is it peer-reviewed? When an article is peer-reviewed, it means other scientists with formal training and expert knowledge in research methodologies and statistical analysis have reviewed the study, giving us peace of mind that it has already gone through some scrutiny. It can be hard to determine which studies have been subjected to peer review, but it helps to look for a received date and an accepted date. A gap between the two suggests that publication was delayed while the peer reviews were happening.[9] Does peer-reviewed mean the results should be taken as gospel? Absolutely not. But a peer-reviewed journal article, other factors being equal, has greater credibility than one that is not.
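For readers who like to see a checklist written down explicitly, the three checkpoints above can be sketched in a few lines of Python. This is only an illustration, not a validated scoring tool: the `Article` record, the `checkpoints` function, and the 10-word title threshold are hypothetical choices made for this sketch (the checklist gives no number for a "lengthy" title), while the 10-year age limit comes from the first checkpoint.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Article:
    """Hypothetical record of details a reader can find on an article page."""
    title: str
    published: date
    received: Optional[date] = None  # date the journal received the manuscript
    accepted: Optional[date] = None  # date the journal accepted the manuscript


MAX_AGE_YEARS = 10    # from the checklist: anything older than 10 years is suspect
MIN_TITLE_WORDS = 10  # assumption: no threshold for a "lengthy" title is given


def checkpoints(article: Article, today: date) -> dict:
    """Return a True/False flag for each of the three checkpoints."""
    age_years = (today - article.published).days / 365.25
    # A received date followed by a later accepted date hints at peer review.
    has_review_gap = (
        article.received is not None
        and article.accepted is not None
        and article.accepted > article.received
    )
    return {
        "recent": age_years <= MAX_AGE_YEARS,
        "specific_title": len(article.title.split()) >= MIN_TITLE_WORDS,
        "likely_peer_reviewed": has_review_gap,
    }


# Usage with a made-up article (not a real study)
paper = Article(
    title="Effects of a hypothetical intervention on sleep quality in adults: "
          "a randomized controlled trial",
    published=date(2019, 5, 1),
    received=date(2018, 11, 3),
    accepted=date(2019, 3, 20),
)
print(checkpoints(paper, today=date(2021, 6, 1)))
```

Passing all three flags only raises the odds that a study is worth citing; as noted above, it says nothing about the design and methodology, which still need to be read.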

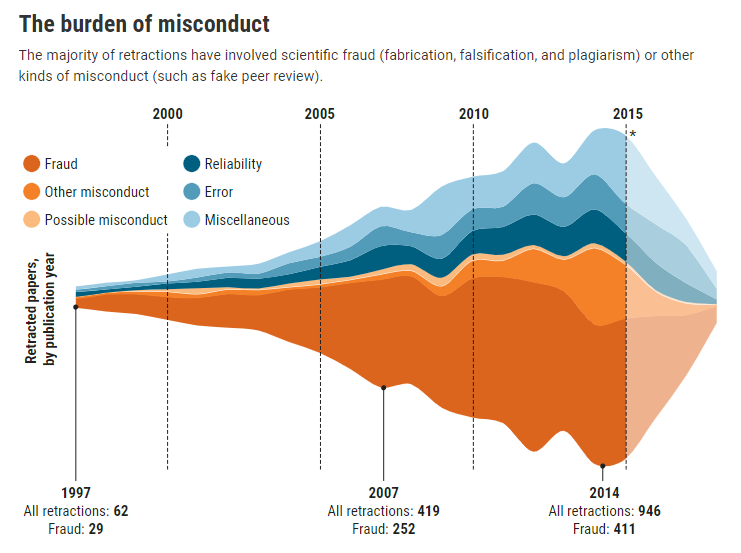

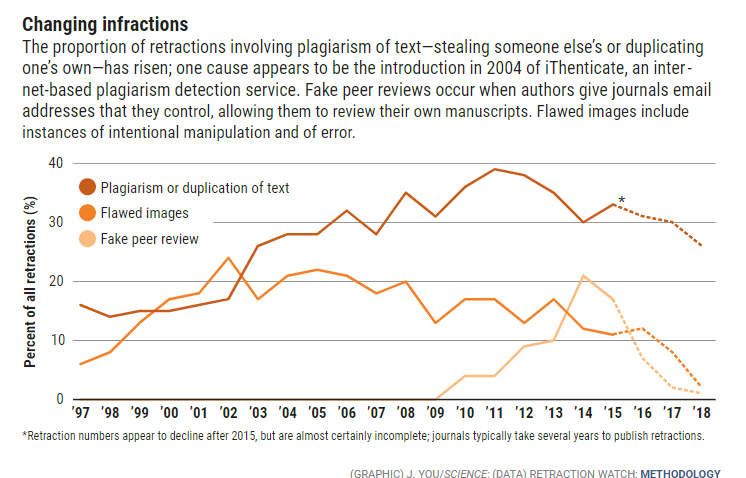

Now that we know a little more about how to conduct valid research, we can share content with an open mind. Journal articles are debunked regularly and with increasing frequency (graph).[10]

Research is not fact; it is an educated indication that there may be a strong correlation between two variables, based on study or experiment. Data and journal articles are so readily accessible in today’s technologically driven world that it is easy to find “science” that “proves” a position on any topic. When we present findings in an open forum, we should expect others to disagree. Rather than being married to our positions and cemented in our beliefs, we should recognize that research has faults and findings have flaws, and be open to learning rather than defending the findings of a study conducted by a third party we do not know.

“Education is not the filling of a pail, but the lighting of a fire.”

– W.B. Yeats

[1] https://ora.ox.ac.uk/objects/uuid:18c8f2eb-f616-481a-9dff-2a479b2801d0/download_file?file_format=pdf&safe_filename=reuters_institute_digital_news_report_2019.pdf&type_of_work=Report

[2] https://advances.sciencemag.org/content/6/43/eabd4563

[3] https://www.scimagojr.com/journalrank.php

[4] https://www.frontiersin.org/articles/10.3389/fnhum.2018.00037/full

[5] https://akjournals.com/view/journals/11192/76/2/article-p391.xml

[6] https://www.frontiersin.org/articles/10.3389/fnhum.2018.00037/full

[7] https://www.frontiersin.org/articles/10.3389/fnhum.2018.00037/full

[8] https://bowvalleycollege.libguides.com/c.php?g=10229&p=52137#:~:text=Peer%20Review%3A%20The%20process%20by,publication%20in%20an%20academic%20journal

[9] https://bowvalleycollege.libguides.com/c.php?g=10229&p=52137#:~:text=Peer%20Review%3A%20The%20process%20by,publication%20in%20an%20academic%20journal

[10] https://www.sciencemag.org/news/2018/10/what-massive-database-retracted-papers-reveals-about-science-publishing-s-death-penalty

(Brendan Rolfe – BIG Media Ltd., 2021)